Time to mention some great updates in the newest versions of DiskDigger, as well as its cousin FileSystemAnalyzer, which is my “internal” tool for tinkering with various file systems.

ZFS

I’ve embarked on a bit of self-study of the ZFS file system, specifically its on-disk structures, for the purpose of forensic analysis and opportunities for data recovery. DiskDigger (and FileSystemAnalyzer) now supports ZFS partitions on physical disks and disk images. At the moment it can only parse the portion of a ZFS pool that resides on the disk being examined, i.e. it does not yet fully support pools that span multiple disks, but this will be updated soon.

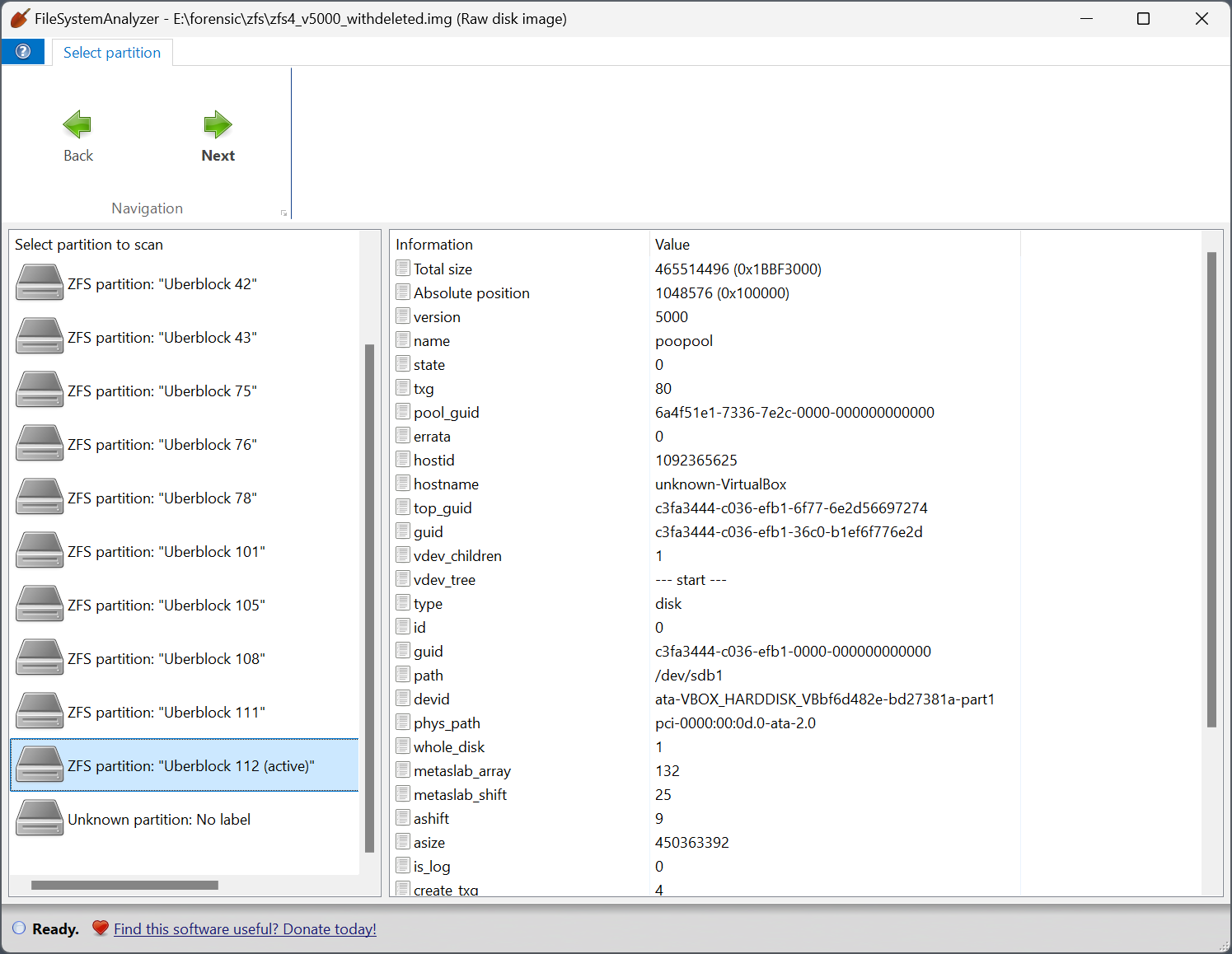

FileSystemAnalyzer now lets you visualize and parse a ZFS partition in a couple of unique ways. First, you can select the uberblock from which to start parsing the file system. ZFS uses a round-robin list of uberblocks, where every new “transaction group” causes a new uberblock to be written or updated, and the uberblock with the highest transaction group number is the “active” one. This implies that “older” uberblocks could potentially point to file system structures that are deleted, or forensically interesting in other ways. FileSystemAnalyzer presents the list of potentially parseable uberblocks as separate “partitions”, which you can select.

I have definitely observed cases where deleting files causes a new “transaction group” to be recorded, which creates a new uberblock; and then parsing from the previous uberblock allows the deleted files to be seen.
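To make the “highest transaction group wins” rule concrete, here is a minimal Python sketch of scanning an uberblock ring and ordering the candidates from newest to oldest. It is not how DiskDigger does it internally. It assumes each uberblock starts with five 64-bit fields (magic, version, txg, guid sum, timestamp), that the magic is `0x00bab10c`, and that slots are 1024 bytes apart (larger sector sizes use larger slots). The function name `find_uberblocks` is made up for illustration, and the sketch skips checksum validation, which a real parser would need.

```python
import struct

UBERBLOCK_MAGIC = 0x00BAB10C  # assumed uberblock magic value


def find_uberblocks(ring: bytes, slot_size: int = 1024):
    """Return (slot_index, txg, timestamp) tuples, newest transaction group first.

    `ring` is the raw uberblock array from one label; `slot_size` is an
    assumption (1024 bytes) and must match the pool's sector size.
    """
    found = []
    for slot in range(len(ring) // slot_size):
        header = ring[slot * slot_size : slot * slot_size + 40]
        # The pool may have been written on a little- or big-endian machine,
        # so try both byte orders and keep whichever yields the magic.
        for endian in ("<", ">"):
            magic, _version, txg, _guid_sum, timestamp = struct.unpack(
                endian + "5Q", header
            )
            if magic == UBERBLOCK_MAGIC:
                found.append((slot, txg, timestamp))
                break
    # Highest txg first; the timestamp breaks ties.
    return sorted(found, key=lambda u: (u[1], u[2]), reverse=True)
```

The first element of the result is the “active” uberblock; the remaining entries are the older candidates that might still point at structures from before a deletion.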

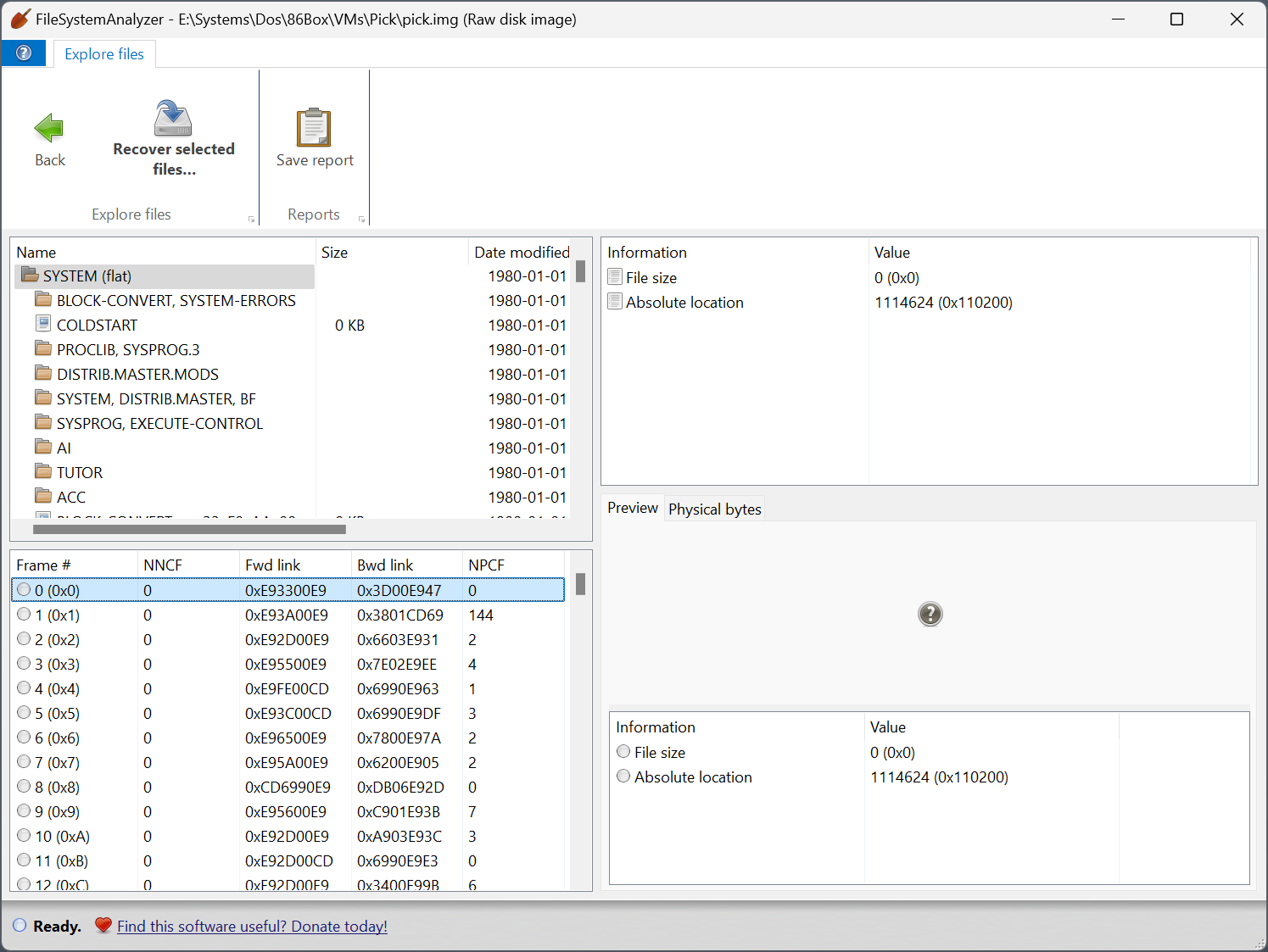

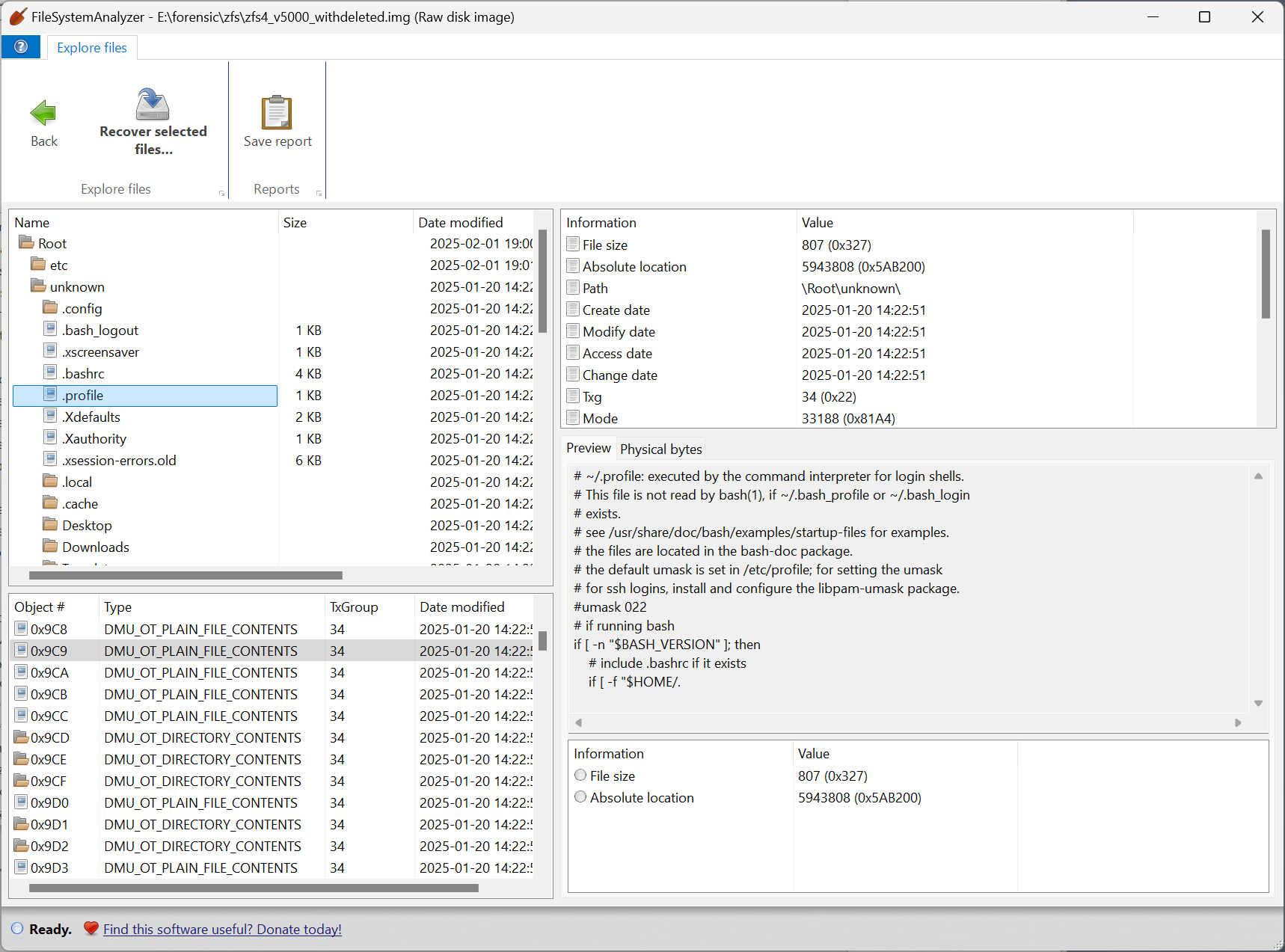

Then when viewing the actual files in the ZFS partition, FileSystemAnalyzer lets you browse the raw “object list”, which is a flat list of objects that represent all the files and directories organized in the file system tree, but might also include items that are not present in the tree.
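As a toy illustration of why the flat object list can be more revealing than the tree, the sketch below walks a directory tree from its root and reports which objects were never reached. The data model here is entirely hypothetical (plain Python dicts and integers standing in for parsed objects and directory entries), not the real on-disk format or FileSystemAnalyzer’s code.

```python
from collections import deque


def unreachable_objects(object_ids, dir_entries, root_id):
    """Return object numbers that exist in the flat list but not in the tree.

    object_ids:  iterable of every object number found in the object list.
    dir_entries: dict mapping a directory's object number to a
                 {name: child_object_number} dict (hypothetical model).
    root_id:     object number of the root directory.
    """
    seen = {root_id}
    queue = deque([root_id])
    while queue:
        current = queue.popleft()
        # Non-directories simply have no entry in dir_entries.
        for child in dir_entries.get(current, {}).values():
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return sorted(set(object_ids) - seen)
```

Anything this returns is an object that is present in the list but that no directory links to, which is exactly the kind of item worth a second look.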

As an aside, although the design of ZFS is mostly very sound, I’m a bit taken aback by the complexity of certain portions of ZFS. For example, ZFS uses at least four different ways of storing key-value pairs, each for different purposes:

- nvlist, for storing header metadata at the beginning of the file system.

- SA (system attributes), for storing attributes for files and directories. Because ZFS is designed to be maximally versatile and cross-platform, the types of attributes associated with files and folders can be defined dynamically in a system-attribute registry stored in the metadata of the filesystem.

- ZAP: this is the main storage mechanism by which the actual filesystem (files, directories, symlinks, etc.) is structured, achieving a B-tree-like structure. However (!) ZAP offers two different ways of structuring it:

  - Microzap: when there are few enough entries, the structure becomes completely different, and more compact.

  - Fatzap: the actual, full-fledged mechanism for storing the file system tree.
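To give a feel for how compact the microzap form is, here is a rough Python sketch of reading one. It rests on my reading of the OpenZFS headers, so treat the layout as an assumption: a 64-byte header whose first 64-bit word is the block type `(1 << 63) + 3`, followed by fixed 64-byte entries, each holding a 64-bit value, a 32-bit collision differentiator, 2 bytes of padding, and a 50-byte null-terminated name. It also assumes little-endian data, and `parse_microzap` is just an illustrative name.

```python
import struct

ZBT_MICRO = (1 << 63) + 3  # assumed block-type marker for a microzap
MZAP_ENT_LEN = 64          # assumed size of the header and of each entry


def parse_microzap(block: bytes):
    """Return a {name: value} dict from a (little-endian) microzap block."""
    block_type, _salt, _normflags = struct.unpack_from("<3Q", block, 0)
    if block_type != ZBT_MICRO:
        raise ValueError("not a microzap block")
    entries = {}
    # Entries start right after the 64-byte header, one every 64 bytes.
    for offset in range(MZAP_ENT_LEN, len(block) - MZAP_ENT_LEN + 1, MZAP_ENT_LEN):
        value, _cd, _pad, raw_name = struct.unpack_from("<QIH50s", block, offset)
        name = raw_name.split(b"\0", 1)[0]
        if name:  # an empty name marks an unused slot
            entries[name.decode("utf-8", "replace")] = value
    return entries
```

A fatzap, by contrast, needs a header block, a pointer table, and separate leaf blocks, so a parser has to handle both forms and check the block type first to know which one it is looking at.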

UFS / UFS2 / Minix / Ultrix / Xenix

…Really, all Unix-like file systems. Or, I should say, all inode-based file systems. DiskDigger and FileSystemAnalyzer now have expanded support for more obscure and legacy Unix-like file systems, like Ultrix and Xenix, some of which have different versions that are mutually incompatible. If you have disk images of these types of very old operating systems, send them to me! I’m always looking for obscure stuff to test with.

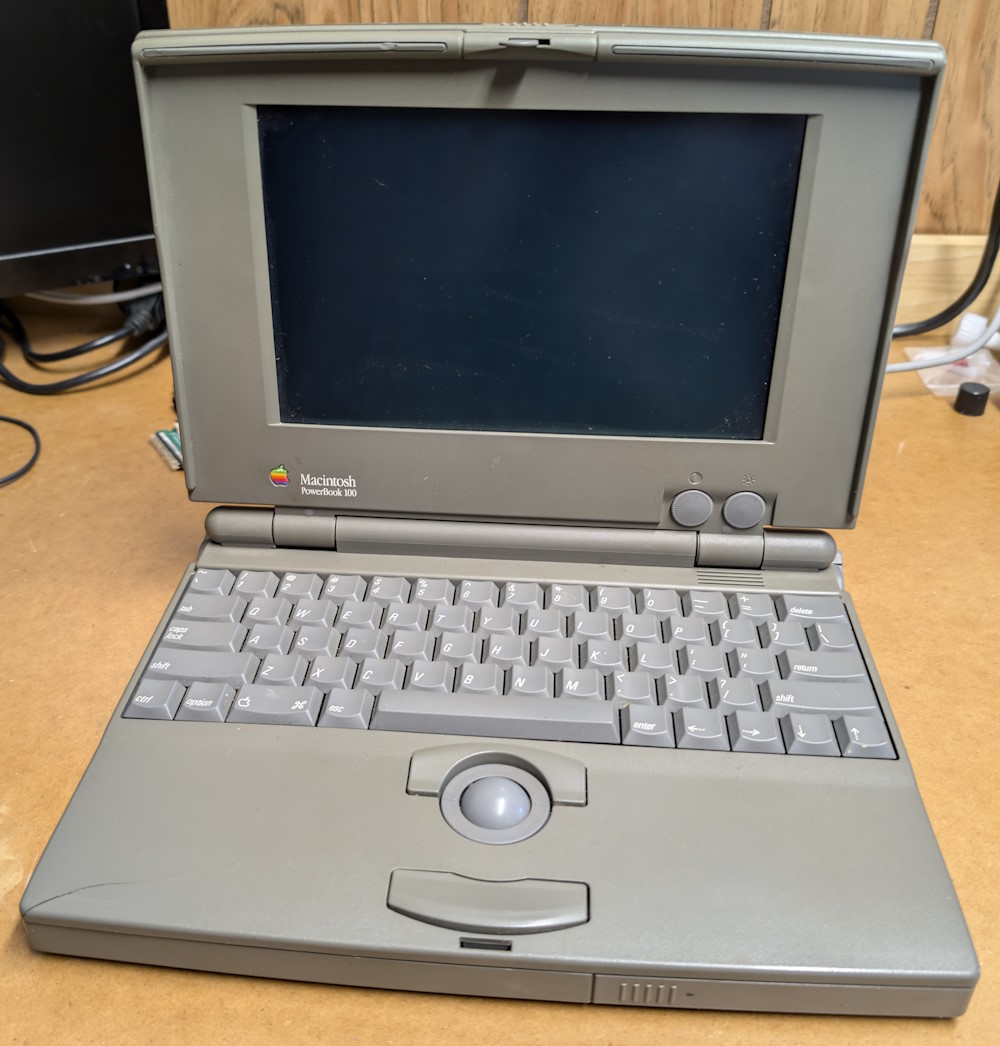

Pick R83

The Pick Operating System, created by a guy whose actual name was Dick Pick, was a super interesting blip in computing history. Everything about this operating system is database-driven, including the file system, if you can call it that. The “account” that a user signs into is really a database, a “file” is really a table in the database, and then each file contains “attributes” and “records” that correspond to columns and rows. The Pick OS found its way into some niche markets, but obviously didn’t last against its larger competitors. It was, however, a bit ahead of its time with its database-centric design, and these ideas are arguably “coming back” in the form of NoSQL.

I’d like to add more meaningful support for Pick partitions in the future, but for now, you can in fact browse a Pick file system in a minimal way, and also see a list of raw on-disk “frames” that make up the Pick database: